Why this lesson matters

This lesson covers the boundary between local experimentation and safer real-world execution: who gets access, which commands need approval, and why the stricter rule should win.

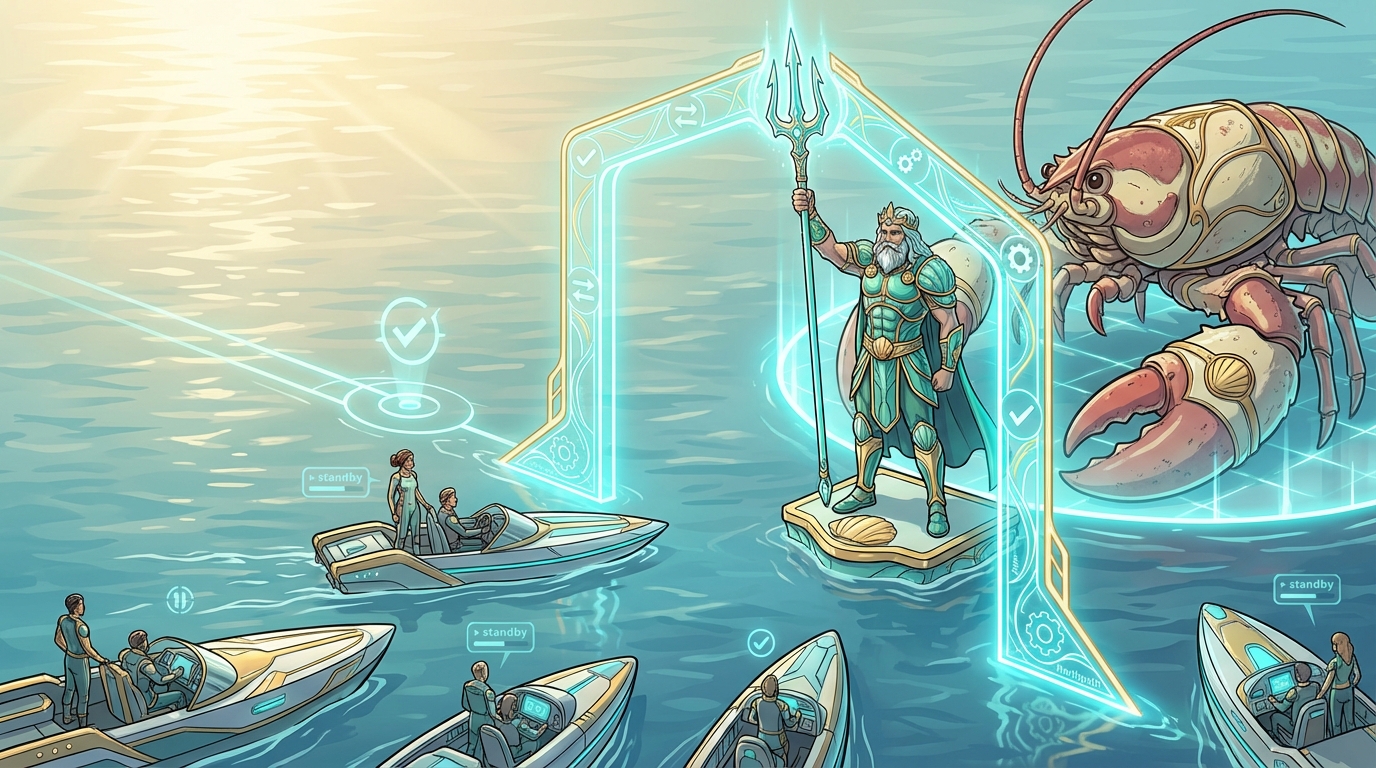

Security boundaries and execution policy

The guardrails behind approvals and device pairing, and how they shape safer command execution in OpenClaw.

Learners preparing to let OpenClaw touch real host file systems and databases, or run terminal commands.

Learning goals

Prerequisites

How to use this lesson

Start with the key ideas, work through the action steps, then use the mistakes, notes, and assignment to turn the lesson into a repeatable habit you can trust later.

Action steps

Before enabling execution tools, decide which directories the agent may read and which actions require explicit human approval.

That turns safety into an operating rule instead of a vague intention.
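A minimal sketch of the read-scope half of that rule, assuming a hypothetical allowlist of directory roots. `ALLOWED_READ_ROOTS`, `can_read`, and the `/srv/agent-workspace` path are illustrative names, not OpenClaw settings. Resolving the path first matters: a request such as `/srv/agent-workspace/../secrets.txt` should be judged by where it actually points, not by how it is spelled.

```python
from pathlib import Path

# Hypothetical allowlist of directories the agent may read.
# This is an illustration, not an OpenClaw configuration key.
ALLOWED_READ_ROOTS = [Path("/srv/agent-workspace").resolve()]


def can_read(requested: str, roots: list[Path] = ALLOWED_READ_ROOTS) -> bool:
    """Return True only if the resolved path lies inside an allowed root."""
    # resolve() collapses ".." segments and follows symlinks, so a path that
    # merely looks like it is inside the workspace cannot escape it.
    target = Path(requested).resolve()
    # is_relative_to() (Python 3.9+) is also True when target equals the root.
    return any(target.is_relative_to(root) for root in roots)
```

With this rule, `can_read("/srv/agent-workspace/report.txt")` is allowed, while `can_read("/srv/agent-workspace/../secrets.txt")` is refused because it resolves to `/srv/secrets.txt`, outside the root.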

Observe how tool policy and gateway defaults interact. Create one safe test where the agent requests an action that should still be blocked.

Leave this step with one rule in mind: when safety layers disagree, the stricter rule should win.

Reframe device pairing as owner-approved identity access. It is not just a convenience step for connecting another device.

This shifts attention beyond 'what command ran' to the equally important question of 'who was allowed to initiate it'.

Write down which actions stay behind human approval, which identities require pairing, and which environments must stay completely off-limits.

That note becomes the bridge from lesson content to a repeatable operating habit.
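One way to keep that note honest is to make it executable. The sketch below is hypothetical: `OperatingNote`, `decide`, and the example action names, identities, and environments are illustrative, not OpenClaw configuration. It checks the three lists from hardest boundary to softest, so an off-limits environment or an unpaired identity is refused before the approval rule is even considered.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    DENY = "deny"
    ASK = "ask"      # needs explicit human approval before running
    ALLOW = "allow"


@dataclass(frozen=True)
class OperatingNote:
    """Hypothetical written policy: approvals, pairing, off-limits environments."""
    approval_required: frozenset[str]
    paired_identities: frozenset[str]
    off_limits_envs: frozenset[str]

    def decide(self, action: str, identity: str, environment: str) -> Decision:
        # Hard boundary first: some environments are never touched.
        if environment in self.off_limits_envs:
            return Decision.DENY
        # Identity next: an unpaired identity cannot initiate anything.
        if identity not in self.paired_identities:
            return Decision.DENY
        # Only then does the action-level approval rule apply.
        if action in self.approval_required:
            return Decision.ASK
        return Decision.ALLOW


# Example note; every value here is hypothetical.
note = OperatingNote(
    approval_required=frozenset({"shell.exec", "fs.write"}),
    paired_identities=frozenset({"owner-laptop"}),
    off_limits_envs=frozenset({"production"}),
)
```

Here `note.decide("shell.exec", "owner-laptop", "staging")` returns `Decision.ASK`, while the same request against `"production"` returns `Decision.DENY` regardless of who sent it.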

The approvals documentation describes approvals as a hard guardrail for allowing a sandboxed agent to run commands on a real machine. That is the right mental model to keep.

Once an agent can change a real host, the stakes are different. The question is no longer just whether the task succeeds, but whether it stays controlled and reversible if something goes wrong.

Treat approvals as part of responsible delegation. The goal is not speed without oversight. The goal is clear, reviewable execution on a real system.
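To make "clear, reviewable execution" concrete, here is a minimal sketch of an approval record, assuming a hypothetical in-process object rather than any real OpenClaw interface. `ApprovalRequest` and `may_run` are illustrative names. Nothing runs until a named human approves, and each decision records who made it and when, so a later reviewer can reconstruct what happened.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ApprovalRequest:
    """Hypothetical record of one command waiting for a human decision."""
    command: str
    requested_by: str
    reviewer: Optional[str] = None
    decided_at: Optional[datetime] = None
    approved: Optional[bool] = None  # None means the request is still pending

    def decide(self, reviewer: str, approve: bool) -> None:
        # A decision is final; re-deciding would blur the review trail.
        if self.approved is not None:
            raise ValueError("request already decided")
        self.approved = approve
        self.reviewer = reviewer
        self.decided_at = datetime.now(timezone.utc)


def may_run(req: ApprovalRequest) -> bool:
    # Pending or rejected requests never run; only an explicit approval does.
    return req.approved is True
```

The design choice is that "pending" is a distinct state from "rejected", and neither one allows execution.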

The official documentation highlights an important design rule: effective behavior is determined by the stricter of the tool request and the gateway approval defaults.

That prevents accidental over-permission. If a tool request is looser than your gateway rules, the stricter gateway rule still wins.

This is a useful system-design habit beyond OpenClaw: when safety layers disagree, the safer rule should prevail.
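The stricter-wins rule is easy to express in code. The sketch below assumes a hypothetical three-level ordering (`ALLOW` < `ASK` < `DENY`); `Strictness` and `effective_policy` are illustrative names, and OpenClaw's actual policy settings may use different names and levels, so check the approvals documentation for the real options. Because the effective level is the maximum of the two layers, a tool request can tighten the gateway default but never loosen it.

```python
from enum import IntEnum


class Strictness(IntEnum):
    """Hypothetical policy levels, ordered from loosest to strictest."""
    ALLOW = 0  # run without asking
    ASK = 1    # run only after human approval
    DENY = 2   # never run


def effective_policy(tool_request: Strictness, gateway_default: Strictness) -> Strictness:
    # The stricter of the two layers wins, so a looser tool request
    # cannot override a stricter gateway default.
    return max(tool_request, gateway_default)
```

For instance, a tool that requests `ALLOW` against a gateway default of `ASK` still resolves to `ASK`, which is exactly the kind of case worth confirming in a safe test.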

The pairing docs cover both DM pairing and device pairing. In plain terms, pairing is how the owner decides who is allowed to connect to the system in the first place.

That matters because safety starts before the command itself. It begins at the identity and access layer, not only at the moment an agent tries to run something risky.
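A minimal sketch of pairing as owner-approved identity access, assuming a hypothetical in-memory registry. `PairingRegistry` and its methods are illustrative, not OpenClaw's pairing API. An unknown sender receives a one-time code and nothing more; only after the owner approves that code can the identity initiate anything.

```python
import secrets


class PairingRegistry:
    """Hypothetical owner-controlled allowlist of identities."""

    def __init__(self) -> None:
        self._pending: dict[str, str] = {}  # one-time code -> identity awaiting approval
        self._paired: set[str] = set()

    def request(self, identity: str) -> str:
        # An unknown identity only receives a code; it gains no access yet.
        code = secrets.token_hex(4)
        self._pending[code] = identity
        return code

    def approve(self, code: str) -> bool:
        # Called by the owner. Unknown or already-used codes are rejected,
        # because pop() removes the code on first use.
        identity = self._pending.pop(code, None)
        if identity is None:
            return False
        self._paired.add(identity)
        return True

    def may_initiate(self, identity: str) -> bool:
        return identity in self._paired
```

The access check happens before any command is considered: an identity that never completed pairing is refused no matter what it asks to run.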

Command safety is not an afterthought to add later. It belongs in the design before the workflow touches anything important.

If you wait until the system is already touching customer files, production data, or important host directories, you are making trust decisions too late.

That is why this lesson closes the Foundations path. Setup, architecture, and browser discipline come first. Only after that should you widen access on a real host.

Common mistakes

Why this matters in real work

Approval rules and pairing habits are what turn a demo into a workflow people can trust.

Clear boundaries make future reviews, handoffs, and client conversations easier.

Assignment

Key takeaway

The most durable OpenClaw workflow is not the fastest one. It is the one with clear approval boundaries, deliberate identity pairing, and a trust model that fails safely.

If you want a fuller operating guide after this lesson, continue with OpenClaw Playbook One.

Sources and references

In this course

Related guides

Read the related guide and course overview if you want broader context around safety, workflow design, and the rest of the learning path.

This is currently the final published lesson in the sequence.